1 概述

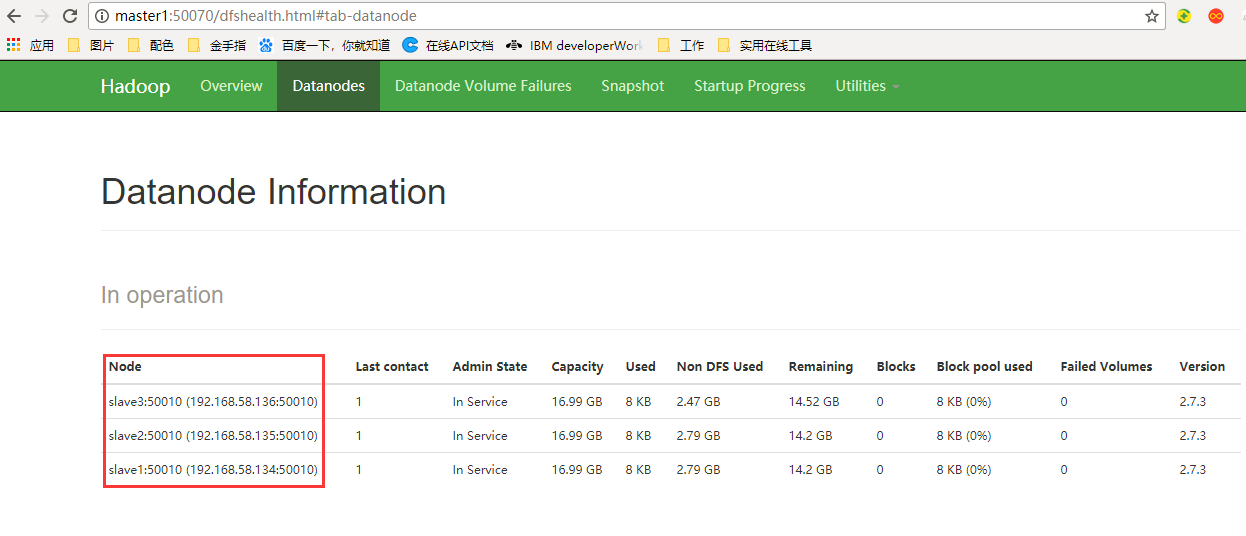

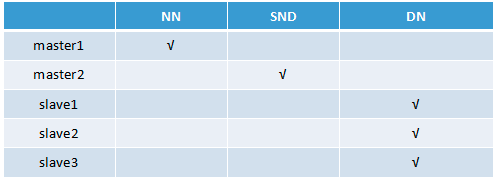

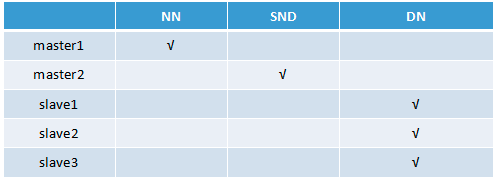

本文章介绍大数据平台Hadoop的分布式环境搭建、以下为Hadoop节点的部署图,将NameNode部署在master1,SecondaryNameNode部署在master2,slave1、slave2、slave3中分别部署一个DataNode节点

NN=NameNode(名称节点)

SND=SecondaryNameNode(NameNode的辅助节点)

DN=DataNode(数据节点)

2 前期准备

(1)准备五台服务器

如:master1、master2、slave1、slave2、slave3

(2)关闭所有服务器的防火墙

$ systemctl stop firewalld $ systemctl disable firewalld

(3)分别修改各服务器的/etc/hosts文件,内容如下:

192.168.56.132 master1 192.168.56.133 master2 192.168.56.134 slave1 192.168.56.135 slave2 192.168.56.136 slave3

注:对应修改个服务器的/etc/hostname文件,分别为 master1、master2、slave1、slave2、slave3

(4)分别在各台服务器创建一个普通用户与组

$ groupadd hadoop #增加新用户组 $ useradd hadoop -m -g hadoop #增加新用户 $ passwd hadoop #修改hadoop用户的密码

切换至hadoop用户:su hadoop

(5)各服务器间的免密码登录配置,分别在各自服务中执行一次

$ ssh-keygen -t rsa #一直按回车,会生成公私钥 $ ssh-copy-id hadoop@master1 #拷贝公钥到master1服务器 $ ssh-copy-id hadoop@master2 #拷贝公钥到master2服务器 $ ssh-copy-id hadoop@slave1 #拷贝公钥到slave1服务器 $ ssh-copy-id hadoop@slave2 #拷贝公钥到slave2服务器 $ ssh-copy-id hadoop@slave3 #拷贝公钥到slave3服务器

注:以上操作需要登录到hadoop用户操作

(6)下载hadoop包,hadoop-2.7.5.tar.gz

官网地址:https://archive.apache.org/dist/hadoop/common/hadoop-2.7.5/

3 开始安装部署

(1)创建hadoop安装目录

$ mkdir -p /home/hadoop/app/hadoop/{tmp,hdfs/{data,name}}

(2)将安装包解压至/home/hadoop/app/hadoop下

$tar zxf tar -zxf hadoop-2.7.5.tar.gz -C /home/hadoop/app/hadoop

(3)配置hadoop的环境变量,修改/etc/profile

JAVA_HOME=/usr/java/jdk1.8.0_131 JRE_HOME=/usr/java/jdk1.8.0_131/jre HADOOP_HOME=/home/hadoop/app/hadoop/hadoop-2.7.5 PATH=$PATH:$JAVA_HOME/bin:$JRE_HOME/bin:$HADOOP_HOME/bin:$HADOOP_HOME/sbin export PATH

(4)刷新环境变量

$source /etc/profile

4 配置Hadoop

(1)配置core-site.xml

$ vi /home/hadoop/app/hadoop/hadoop-2.7.5/etc/hadoop/core-site.xml

<configuration> <property> <!-- 配置HDFS的NameNode所在节点服务器 --> <name>fs.defaultFS</name> <value>hdfs://master1:9000</value> </property> <property> <!-- 配置Hadoop的临时目录 --> <name>hadoop.tmp.dir</name> <value>/home/hadoop/app/hadoop/tmp</value> </property> </configuration>

默认配置地址:http://hadoop.apache.org/docs/r2.7.5/hadoop-project-dist/hadoop-common/core-default.xml

(2)配置hdfs-site.xml

$ vi /home/hadoop/app/hadoop/hadoop-2.7.5/etc/hadoop/hdfs-site.xml

<configuration> <property> <!-- 配置HDFS的DataNode的备份数量 --> <name>dfs.replication</name> <value>3</value> </property> <property> <name>dfs.namenode.name.dir</name> <value>/home/hadoop/app/hadoop/hdfs/name</value> </property> <property> <name>dfs.datanode.data.dir</name> <value>/home/hadoop/app/hadoop/hdfs/data</value> </property> <property> <!-- 配置HDFS的权限控制 --> <name>dfs.permissions.enabled</name> <value>false</value> </property> <property> <!-- 配置SecondaryNameNode的节点地址 --> <name>dfs.namenode.secondary.http-address</name> <value>master2:50090</value> </property> </configuration>

默认配置地址:http://hadoop.apache.org/docs/r2.7.5/hadoop-project-dist/hadoop-hdfs/hdfs-default.xml

(3)配置mapred-site.xml

$ cp /home/hadoop/app/hadoop/hadoop-2.7.5/etc/hadoop/mapred-site.xml.template /home/hadoop/app/hadoop/hadoop-2.7.5/etc/hadoop/mapred-site.xml $ vi /home/hadoop/app/hadoop/hadoop-2.7.5/etc/hadoop/mapred-site.xml

<configuration> <property> <!-- 配置MR运行的环境 --> <name>mapreduce.framework.name</name> <value>yarn</value> </property> </configuration>

默认配置地址:http://hadoop.apache.org/docs/r2.7.5/hadoop-mapreduce-client/hadoop-mapreduce-client-core/mapred-default.xml

(4)配置yarn-site.xml

$ vi /home/hadoop/app/hadoop/hadoop-2.7.5/etc/hadoop/yarn-site.xml

<configuration> <property> <name>yarn.nodemanager.aux-services</name> <value>mapreduce_shuffle</value> </property> <property> <!-- 配置ResourceManager的服务节点 --> <name>yarn.resourcemanager.hostname</name> <value>master1</value> </property> <property> <name>yarn.resourcemanager.address</name> <value>master1:8032</value> </property> <property> <name>yarn.resourcemanager.webapp.address</name> <value>master1:8088</value> </property> </configuration>

默认配置地址:http://hadoop.apache.org/docs/r2.7.5/hadoop-yarn/hadoop-yarn-common/yarn-default.xml

(5)配置slaves

$ vi /home/hadoop/app/hadoop/hadoop-2.7.5/etc/hadoop/slaves

slave1 slave2 slave3

slaves文件中配置的是DataNode的所在节点服务

(6)配置hadoop-env

修改hadoop-env.sh文件的JAVA_HOME环境变量,操作如下:

$ vi /home/hadoop/app/hadoop/hadoop-2.7.5/etc/hadoop/hadoop-env.sh

export JAVA_HOME=/usr/java/jdk1.8.0_131

(7)配置yarn-env

修改yarn-env.sh文件的JAVA_HOME环境变量,操作如下:

$ vi /home/hadoop/app/hadoop/hadoop-2.7.5/etc/hadoop/yarn-env.sh

export JAVA_HOME=/usr/java/jdk1.8.0_131

(8)配置mapred-env

修改mapred-env.sh文件的JAVA_HOME环境变量,操作如下:

$ vi /home/hadoop/app/hadoop/hadoop-2.7.5/etc/hadoop/mapred-env.sh

export JAVA_HOME=/usr/java/jdk1.8.0_131

(9)将master1中配置好的hadoop分别远程拷贝至maser2、slave1 、slave2、slave3服务器中

$ scp -r /home/hadoop/app/hadoop hadoop@master2:/home/hadoop/app/ $ scp -r /home/hadoop/app/hadoop hadoop@slave1:/home/hadoop/app/ $ scp -r /home/hadoop/app/hadoop hadoop@slave2:/home/hadoop/app/ $ scp -r /home/hadoop/app/hadoop hadoop@slave3:/home/hadoop/app/

5 启动测试

(1)在master1节点中初始化Hadoop集群

$ hadoop namenode -format

(2)启动Hadoop集群

$ start-dfs.sh $ start-yarn.sh

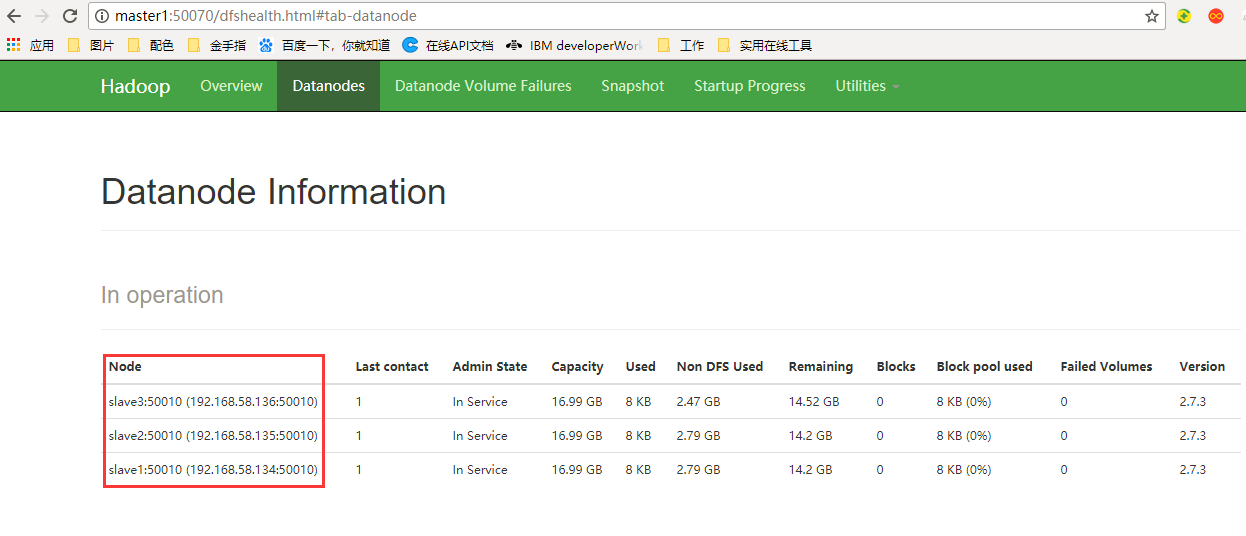

(3)验证集群是否成功

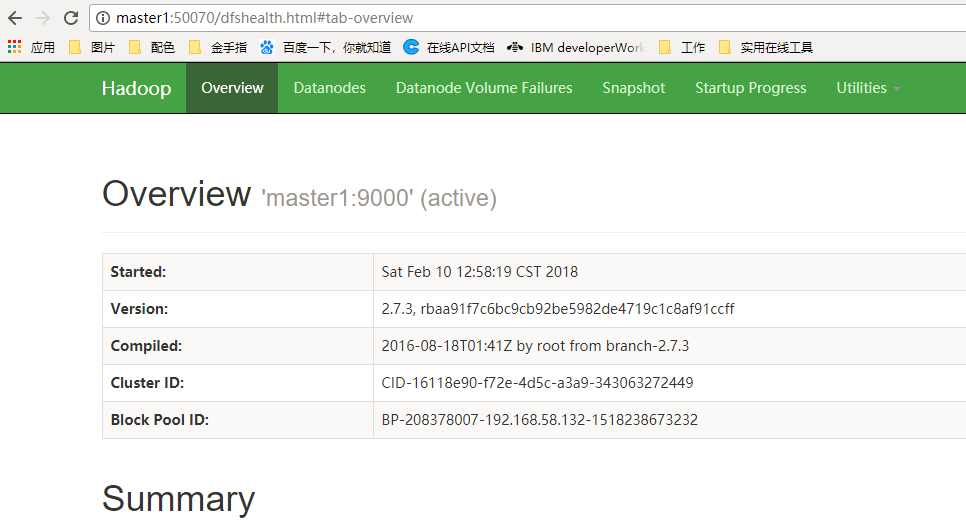

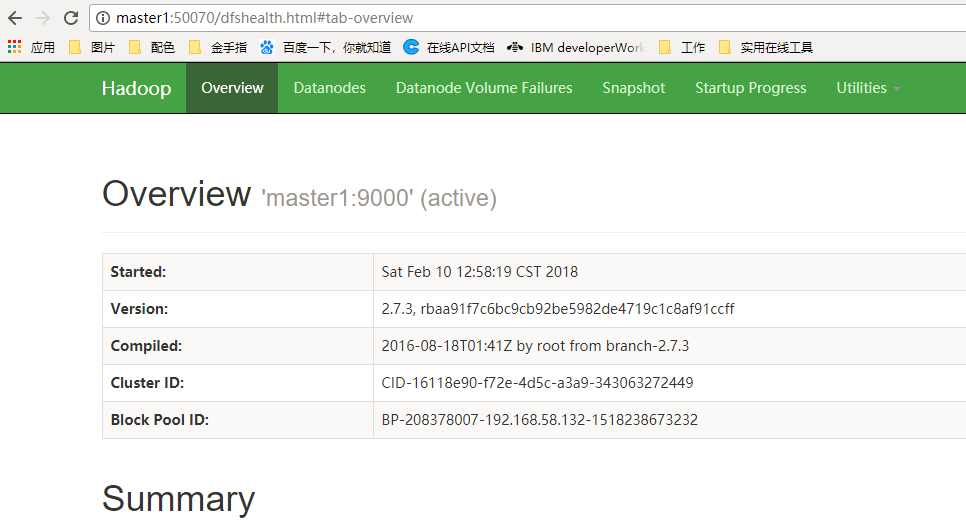

浏览器中访问50070的端口,如下证明集群部署成功